On this website, we publish two high-quality street-level datasets, one from a forest and one from a city centre, acquired with our high-performance portable mobile mapping system (MMS), the BIMAGE Backpack. Both datasets will be freely available for scientific use from July 2021, in conjunction with the publication of our ISPRS paper, "Open Urban and Forest Datasets from a High-Performance Mobile Mapping Backpack – A Contribution for Advancing the Creation of Digital City Twins."

Abstract

Both datasets, one from a city centre and one from a forest, represent area-wide street-level reality captures that can be used, for example, to establish cloud-based frameworks for infrastructure management and for smart city and forestry applications. The quality of these datasets has been thoroughly evaluated and demonstrated. For example, georeferencing accuracies in the centimetre range have been achieved using these datasets in combination with image-based georeferencing. Both high-quality multi-sensor street-level datasets are suitable for evaluating and improving methods for multiple tasks related to high-precision 3D reality capture and the creation of digital twins. Potential applications range from localization and georeferencing, dense image matching and 3D reconstruction to combined methods such as simultaneous localization and mapping and structure-from-motion, as well as classification and scene interpretation.

The paper is available at: https://doi.org/10.5194/isprs-archives-XLIII-B1-2021-125-2021

Download

The datasets can be downloaded from the following link: https://drive.switch.ch/index.php/s/d0OumqXRiJToGXv

In addition to the two datasets, detailed information about the data structure and data formats is provided as a PDF file.

Please note that the data are available for academic and research purposes only.

Dataset Description

Here, we describe the two backpack MMS datasets that we publish. We captured both datasets in challenging environments. The first dataset is from a test site in the city centre and the second dataset is from another test site in a forest.

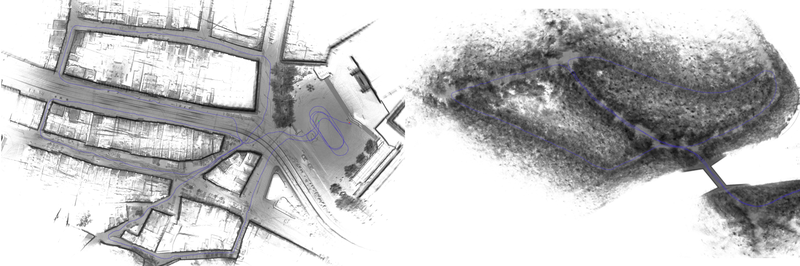

Both datasets contain a) image data with undistorted equidistant and anonymized images from the individual panoramic camera heads, together with the image timestamps, b) LiDAR data represented as timestamped point clouds in the sensor coordinate frame, and c) navigation data with GNSS raw observations from the BIMAGE Backpack and from the reference station, as well as IMU raw data.

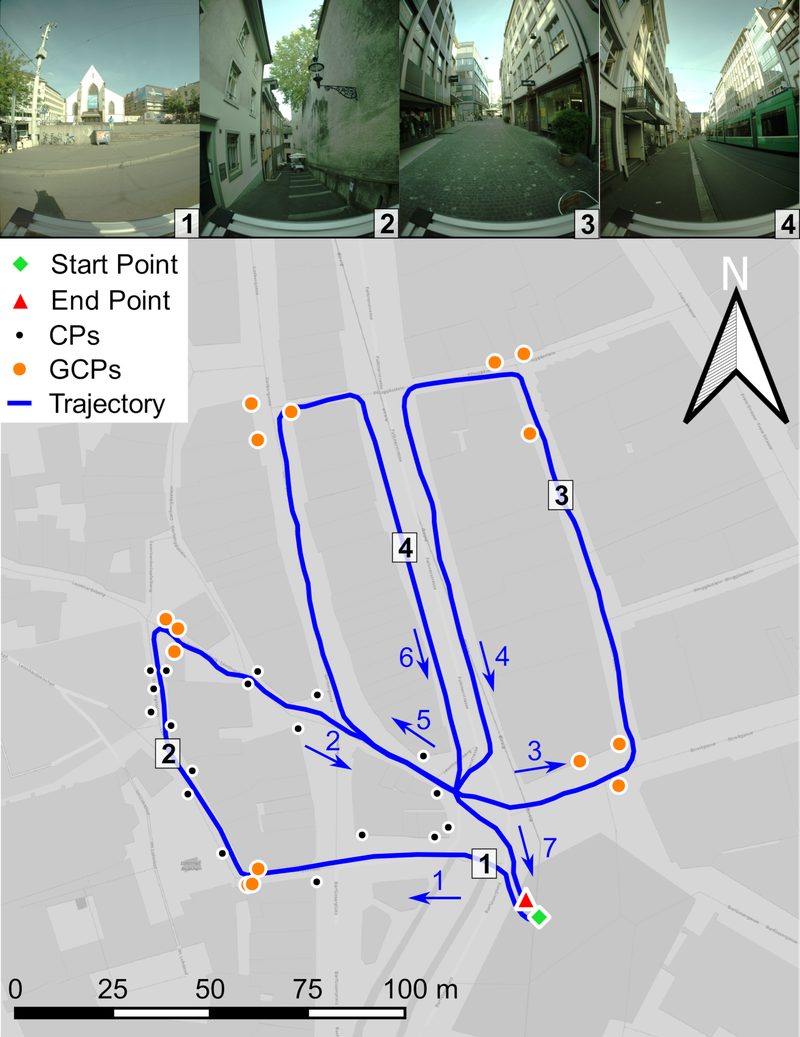
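
Because both the images and the LiDAR point clouds carry timestamps, a common first step is to pair each image with the LiDAR data recorded closest in time. The following is a minimal sketch of such a nearest-timestamp association. It assumes that the timestamps have already been read into sorted Python lists of seconds in a common time base; the function names, the `max_gap` threshold and the sample values are hypothetical, and the actual file formats are described in the PDF that accompanies the download.

```python
from bisect import bisect_left


def nearest_index(sorted_times, t):
    """Return the index of the entry in sorted_times that is closest to t."""
    if not sorted_times:
        raise ValueError("sorted_times must not be empty")
    i = bisect_left(sorted_times, t)
    if i == 0:
        return 0
    if i == len(sorted_times):
        return len(sorted_times) - 1
    # Pick the closer of the two neighbours; ties go to the earlier one.
    if t - sorted_times[i - 1] <= sorted_times[i] - t:
        return i - 1
    return i


def match_images_to_scans(image_times, scan_times, max_gap=0.05):
    """Pair each image with the nearest scan timestamp.

    image_times and scan_times are assumed to be in seconds and to share
    one time base; scan_times must be sorted in ascending order.
    Pairs further apart than max_gap seconds are dropped.
    """
    pairs = []
    for img_idx, t in enumerate(image_times):
        j = nearest_index(scan_times, t)
        if abs(scan_times[j] - t) <= max_gap:
            pairs.append((img_idx, j))
    return pairs


if __name__ == "__main__":
    # Made-up timestamps for illustration only, not values from the datasets.
    images = [10.0, 12.0, 20.0]
    scans = [9.98, 11.0, 12.01, 15.0]
    print(match_images_to_scans(images, scans))
```

The threshold guards against pairing an image with LiDAR data from a different part of the trajectory when one of the sensors has a gap in its recording.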

City Centre

The first dataset was acquired in the city centre of Basel, Switzerland. The 800 m loop-shaped trajectory was recorded in 24 minutes. It covers roads and paths of different widths, including a large square with good GNSS reception for system initialization. By contrast, it also includes narrow alleys with steps and slopes of up to 16 % that are only accessible to pedestrians. Wide pedestrian promenades with shops on both sides dominate other parts of the trajectory. In addition, there is a main traffic axis through the city centre with busy tram and bicycle traffic.

The ‘city centre’ dataset contains 721 panoramic images, approx. 840 million LiDAR points, GNSS data where available and IMU data. We provide 15 ground control points (GCPs) arranged in groups of three and 18 check points (CPs) along the first loop of the trajectory (see map below). Most of the GCPs and CPs are well-defined natural reference points, but some were marked with photogrammetric targets. The reference points were determined by tachymetry and have a 3D standard deviation of 5 mm.

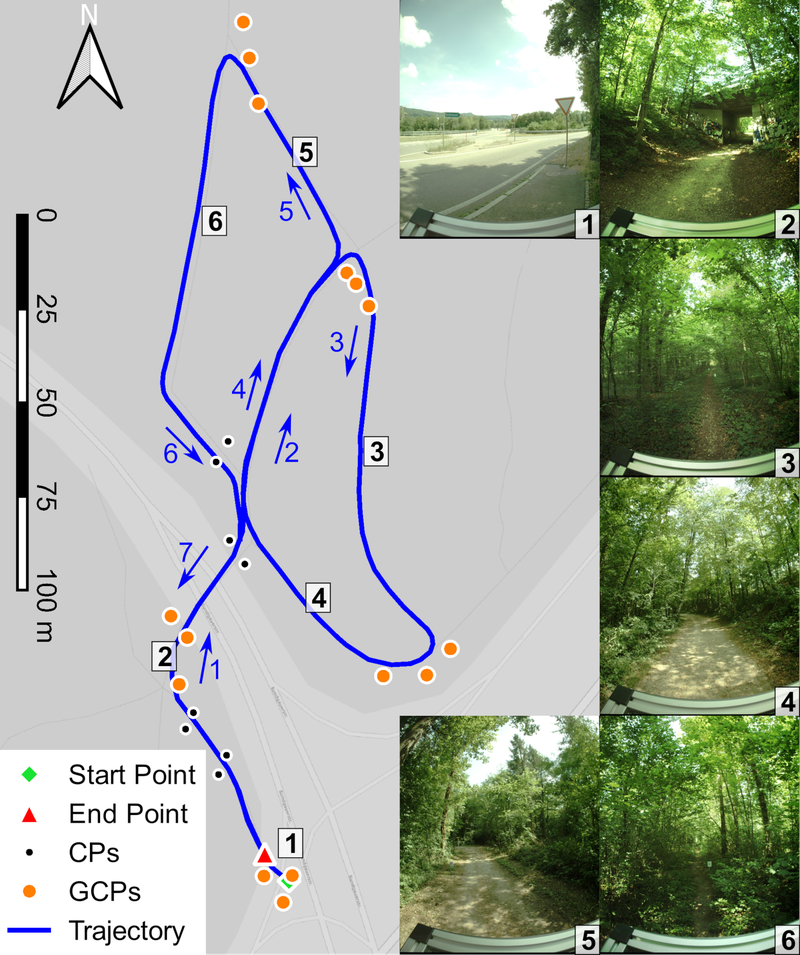
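
The check points can serve as independent references when assessing a georeferencing result. As a minimal sketch, the following computes per-axis and 3D root-mean-square errors between estimated and reference check point coordinates. It assumes that both are given as (x, y, z) tuples in metres, in the same coordinate frame and in matching order; the function name and the sample coordinates are hypothetical and do not come from the datasets.

```python
import math


def checkpoint_rmse(estimated, reference):
    """Return ((rmse_x, rmse_y, rmse_z), rmse_3d) for matched point lists.

    estimated and reference are sequences of (x, y, z) tuples in metres,
    given in the same coordinate frame and in the same order.
    """
    if len(estimated) != len(reference) or not estimated:
        raise ValueError("need two non-empty point lists of equal length")
    n = len(estimated)
    squared_sums = [0.0, 0.0, 0.0]
    for est, ref in zip(estimated, reference):
        for axis in range(3):
            squared_sums[axis] += (est[axis] - ref[axis]) ** 2
    per_axis = tuple(math.sqrt(s / n) for s in squared_sums)
    # The 3D RMSE is the root of the mean squared 3D distance.
    rmse_3d = math.sqrt(sum(squared_sums) / n)
    return per_axis, rmse_3d


if __name__ == "__main__":
    # Made-up coordinates for illustration only.
    reference_points = [(0.0, 0.0, 0.0), (10.0, 0.0, 0.0)]
    estimated_points = [(0.03, 0.0, 0.04), (10.0, 0.0, 0.0)]
    per_axis, rmse_3d = checkpoint_rmse(estimated_points, reference_points)
    print("per-axis RMSE [m]:", per_axis)
    print("3D RMSE [m]:", rmse_3d)
```

When interpreting such values, keep in mind that the reference coordinates themselves have a 3D standard deviation of 5 mm.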

Forest

The second dataset was acquired in a partially dense forest. The trajectory is 740 m long and data capture took 25 minutes. It also incorporates an area with good GNSS reception at a nearby highway exit for system initialization. Furthermore, the forest path leads through a road underpass. Narrow paths with dense vegetation at ground level, accessible only to pedestrians, dominate the scenery in images 3 and 6 of the following figure. In addition, the trajectory also includes drivable forest roads with less dense vegetation.

The ‘forest’ dataset includes 843 panoramic images, approx. 850 million LiDAR points and navigation data in the same scope as the first dataset. We provide 15 GCPs arranged in groups of three and 8 CPs along the first segment of the trajectory (see map below). All points are marked with photogrammetric targets and fixed either on trees or on driven-in pillars. The reference points were determined by tachymetry with closed polygons and have a 3D standard deviation of 5 mm.