Designing human–machine interactions to be natural and intuitive.

Research focus areas

In the Human–Computer Interaction (HCI) research field, we investigate how people interact with digital systems — today and in the future. Our goal is to design interactions and interfaces that are natural, user-friendly, and intuitive. To achieve this, we work with a wide range of modern technologies and employ diverse modalities and forms of interaction:

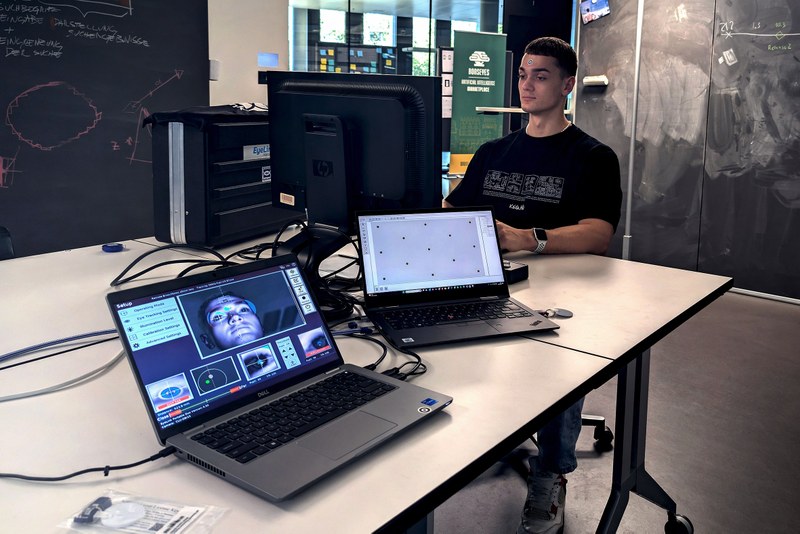

- Eye tracking to analyse visual attention
- Voice interaction to control systems through spoken language
- Speech interaction and conversational interfaces with virtual assistants and companions
- Gesture-based interaction for touchless interfaces
- Projection-based interactive displays
- Brain–computer interfaces to capture cognitive states and intentions
- Adaptive displays
- Human–AI interaction to design comprehensible and trustworthy AI systems
- Human–robot interaction, including social robots and workplace robotics
- Ubiquitous computing, embedding digital technologies seamlessly into everyday life
- Information and data visualisation: development of visual representations of complex content or datasets to make information understandable, accessible, and interpretable, including both static visualisations and interactive dashboards

Our approach is interdisciplinary. We combine computer science, cognitive science, design, and ethics to develop technologies aligned with users’ needs.

In our projects, we design, develop, and evaluate innovative, human-centred interaction solutions — for example in health, education, work, leisure, industry, and public spaces.

Services

We offer a comprehensive range of high-precision eye-tracking systems and software solutions suitable for cognitive experiments, human–computer interaction studies, and usability testing — including portable options for on-site data collection.

Contact us

For further information or to discuss potential collaboration, please contact Thekla Müller.

Infrastructure

Remote Eye Tracker: EyeLink 1000 Plus

Eye Tracker: EyeLink Portable Duo

Software

Remote Eye Tracker: Tobii X1 Light Eyetracker

Apple Vision Pro

HoloLens 2

HTC Vive Pro Eye

Meta Quest Pro

Eye-Tracking Studies Using Webcam and Smartphone

Degree programme offerings

Continuing education offerings

Contact us

For further information about the FHNW School of Computer Science or to discuss potential collaboration opportunities, please contact us:

Prof. Dr. Arzu Çöltekin

- Phone: +41 56 202 84 73 (direct)

- Email: arzu.coltekin@fhnw.ch
